WWVB and the Spectracom 8206 Antenna

I have a Spectracom model 8206 antenna mounted on the roof of my house, facing roughly broadside to Fort Collins, Colorado. The 8206 is a ferrite loop antenna with a built-in preamplifier, which gets its DC power through the coax from the attached Spectracom WWVB receiver. I also tested an AMRAD active antenna; its results are covered separately.

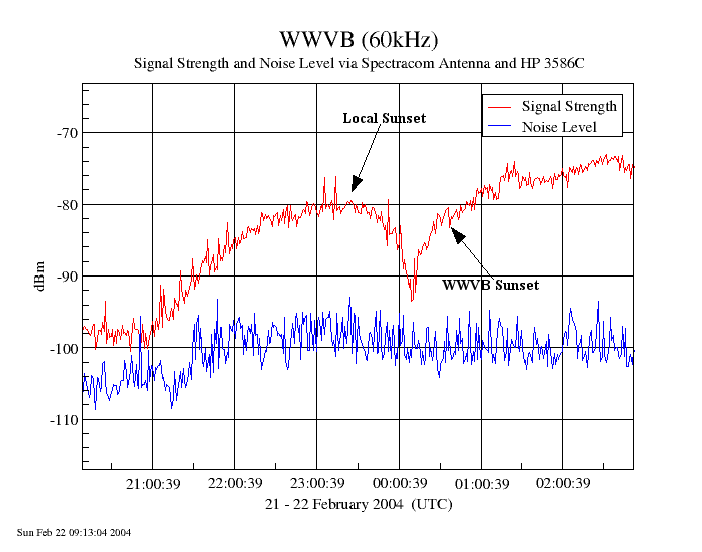

I wanted to see how strong the WWVB signal was here, so I set up a test using an HP 3586C selective voltmeter and some Linux-based GPIB control software I wrote to adjust the receiver and take signal strength readings as well as noise level measurements. Here are the results...

[Charts: Full Run; Signal-to-Noise Ratio; 24 Hours; Sunrise; Sunset]

Some Thoughts

I'm surprised by how unstable the signal strength is during the daytime. My phase plots always show less noise during the day, but the amplitude doesn't seem to behave the same way. Perhaps the days, still short in late February, don't give the daytime propagation enough time to fully stabilize.

Here are the pertinent geographic details:

- My location: Dayton, Ohio, at 39°42'48.8"N, 84°10'20.3"W.

- WWVB location: Fort Collins, Colorado, at 40°40'28.3"N, 105°02'39.5"W.

- Great circle distance: 1100.25 miles (1770.67 kilometers).

- Bearing from Dayton to Fort Collins is 280.19 degrees.

- February 21 sunrise in Dayton was 0722 EST (1222 UTC) and sunset was 1819 EST (2319 UTC).

- February 21 sunrise in Fort Collins was 0647 MST (1347 UTC) and sunset was 1742 MST (0042 UTC).
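The distance and bearing above can be checked with the haversine formula and the standard initial-azimuth formula on a spherical Earth. The sketch below is a minimal example of that check. The Earth radius is an assumption: it uses the "1 arcminute = 1 nautical mile" sphere (about 6366.7 km), which appears to match the 1770.67 km figure. A 6371 km mean radius gives roughly a kilometer more. The helper names `dms` and `great_circle` are illustrative only.

```python
import math

# Assumed sphere: 1 arcminute of arc = 1 nautical mile (1.852 km),
# i.e. R = 1.852 * 60 * 180 / pi ~= 6366.7 km. A 6371 km radius gives ~1 km more.
EARTH_RADIUS_KM = 1.852 * 60 * 180 / math.pi
KM_PER_MILE = 1.609344


def dms(deg, minutes, seconds, hemisphere):
    """Convert degrees/minutes/seconds plus N/S/E/W to signed decimal degrees."""
    value = deg + minutes / 60 + seconds / 3600
    return -value if hemisphere in ("S", "W") else value


def great_circle(lat1, lon1, lat2, lon2):
    """Return (distance_km, initial_bearing_deg) from point 1 to point 2."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dlat = p2 - p1
    dlon = math.radians(lon2 - lon1)
    # Haversine formula for the central angle between the two points.
    a = math.sin(dlat / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlon / 2) ** 2
    central_angle = 2 * math.asin(math.sqrt(a))
    # Initial (forward) azimuth, clockwise from true north, normalized to 0..360.
    y = math.sin(dlon) * math.cos(p2)
    x = math.cos(p1) * math.sin(p2) - math.sin(p1) * math.cos(p2) * math.cos(dlon)
    bearing = math.degrees(math.atan2(y, x)) % 360
    return EARTH_RADIUS_KM * central_angle, bearing


dayton = (dms(39, 42, 48.8, "N"), dms(84, 10, 20.3, "W"))
wwvb = (dms(40, 40, 28.3, "N"), dms(105, 2, 39.5, "W"))
km, bearing = great_circle(*dayton, *wwvb)
print(f"{km:.2f} km, {km / KM_PER_MILE:.2f} miles, bearing {bearing:.2f} deg")
```

With these coordinates the result lands at roughly 1770 km (about 1100 miles) on a bearing of about 280.2 degrees, consistent with the list above.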

Test Methodology

The antenna was connected to a Spectracom 8164 receiver to provide power for its preamp. A tee connector brought the signal, through a DC block, to the HP 3586C selective voltmeter. Because I have very strong local AM signals to contend with here, I put a low pass filter with a 500kHz cutoff ahead of the 3586C. The filter reduced the noise level by about 5dB without any significant effect on the WWVB signal; it is not known whether the Spectracom receivers, with their much narrower input filters, would see the same improvement. I used the 3586C's 20Hz bandwidth filter and autoranging in 10dB steps to generate signal readings with 0.01dBm resolution.

Because the Spectracom receiver was simply placed in parallel with the 3586C, the absolute power readings certainly contain some error, but it should be only a few dB and should be a constant offset.

Another uncertainty is the effect of WWVB's modulation. The station transmits a binary coded decimal timecode by dropping its carrier strength 10dB at the beginning of each second. A one is represented by a 500ms drop, a zero by a 200ms drop, and a marker pulse by an 800ms drop. My readings are not synchronized to the time tick (this is probably not possible with my hardware), so I don't know if any given reading was taken during full power, or during a 10dB dip. I am averaging readings taken over about 24 seconds per minute, and hopefully the modulation effects will largely be averaged out.

However, if the ratio of long "one" and short "zero" bits changes as the timecode marches forward, that would impact the probability of a sample being taken during the low-power interval, and thus impact the average power value. I haven't been able to find any reference on the 'net talking about the average duty cycle of the WWVB modulation, so this is an unknown factor.
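The size of this effect can at least be bounded with some arithmetic. The sketch below computes the fraction of each minute spent at reduced carrier for a frame containing a given number of one-bits. It uses the 10dB drop and the 200/500/800ms pulse widths described above, plus the WWVB frame layout of 60 one-second symbols with 7 markers (seconds 0, 9, 19, 29, 39, 49 and 59). It assumes unsynchronized samples fall at uniformly random points in the minute. That is an idealization, since only about 24 seconds of each minute are sampled. It reports the expected shift both for averaging dB readings directly and for averaging linear power. Which of the two the logging script does is not stated, so both are shown.

```python
import math

# WWVB amplitude timecode: one 60-symbol frame per minute, 7 of them markers.
SECONDS_PER_FRAME = 60
MARKERS_PER_FRAME = 7
DATA_BITS_PER_FRAME = SECONDS_PER_FRAME - MARKERS_PER_FRAME  # 53 one/zero bits

# Reduced-carrier duration per symbol, in seconds (from the description above).
LOW_MARKER, LOW_ONE, LOW_ZERO = 0.8, 0.5, 0.2


def low_power_fraction(ones):
    """Fraction of a minute at reduced carrier for a frame with `ones` one-bits."""
    if not 0 <= ones <= DATA_BITS_PER_FRAME:
        raise ValueError("ones must be between 0 and 53")
    zeros = DATA_BITS_PER_FRAME - ones
    low_seconds = MARKERS_PER_FRAME * LOW_MARKER + ones * LOW_ONE + zeros * LOW_ZERO
    return low_seconds / SECONDS_PER_FRAME


def expected_offset_db(ones, drop_db=10.0):
    """Expected shift of an averaged reading relative to full carrier, in dB.

    Returns (mean of dB readings, dB of mean linear power), assuming samples
    land at uniformly random instants within the minute.
    """
    f = low_power_fraction(ones)
    mean_of_db = -drop_db * f
    low_linear = 10 ** (-drop_db / 10)
    db_of_mean = 10 * math.log10((1 - f) + f * low_linear)
    return mean_of_db, db_of_mean


for ones in (10, 20, 30):
    a, b = expected_offset_db(ones)
    print(f"{ones:2d} ones: {a:6.2f} dB (dB average), {b:6.2f} dB (power average)")
```

Each one-bit that replaces a zero adds 0.3 seconds of reduced carrier. Under these assumptions, a frame with 20 more ones than another lowers the dB-averaged reading by about 1dB.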

I also wanted to see what the signal-to-noise ratio looked like. Since I couldn't just make WWVB go away to measure the noise at 60kHz, I had to improvise. The measurements are made in a 20Hz bandwidth and the filter has very steep skirts, so moving a small distance away from WWVB should show a representative noise level. To reduce the impact of any departure from white noise (particularly a noise source on one side of the channel but not the other), I took readings 100Hz above and 100Hz below 60kHz and averaged them to get the noise result. (The bandwidth of the antenna system is 700Hz; measurement points at ±100Hz are well within this range, yet far enough outside the 3586C filter skirt to yield reasonable results.)

The signal-to-noise chart simply subtracts the noise level from the signal level at each data point of the signal strength chart. This noise information gives a sense of what's going on, but the actual situation inside a WWVB receiver is likely to be different because of its more sophisticated detection system. How much different, I don't know...
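The noise estimate and the SNR subtraction amount to a few lines of arithmetic. The sketch below assumes that readings are averaged directly as dBm values. The text does not say whether the logging script averages in dB or in linear power. The function names are hypothetical.

```python
import statistics


def noise_level_dbm(below_readings, above_readings):
    """Noise estimate: average of the mean readings 100 Hz below and above 60 kHz."""
    below = statistics.fmean(below_readings)
    above = statistics.fmean(above_readings)
    return (below + above) / 2


def snr_db(signal_readings, below_readings, above_readings):
    """Signal-to-noise ratio in the 20 Hz measurement bandwidth, in dB.

    Both terms are in dBm measured in the same bandwidth, so their difference
    is a ratio in dB.
    """
    signal = statistics.fmean(signal_readings)
    return signal - noise_level_dbm(below_readings, above_readings)
```

Because both the signal and noise readings come from the same 20Hz filter, no bandwidth correction is needed before subtracting. The SNR values are therefore specific to that bandwidth.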

A Perl script running under Linux controlled the 3586C via its GPIB interface. Measurements were taken in this sequence:

- Run autocalibration routine.

- Tune to 60.0kHz, take 24 signal strength readings (at about one-second intervals), and average them to establish the signal level.

- Tune to 59.9kHz, take 12 signal strength readings, and average the result.

- Tune to 60.1kHz, take 12 signal strength readings, and average the result.

- Average the 59.9kHz and 60.1kHz readings to establish the noise level.

- Log the time and the signal and noise values.

- Repeat.
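The sequence above can be sketched as a short loop. The original is a Perl script whose code isn't shown. This Python version hides the instrument behind a hypothetical `SelectiveVoltmeter` interface with `calibrate`, `tune` and `read_dbm` methods. The real 3586C GPIB commands are deliberately not reproduced here, and the interface and function names are illustrative assumptions.

```python
import statistics
from datetime import datetime, timezone
from typing import Protocol


class SelectiveVoltmeter(Protocol):
    """Hypothetical wrapper around the 3586C; actual GPIB commands not shown."""

    def calibrate(self) -> None: ...
    def tune(self, frequency_hz: float) -> None: ...
    def read_dbm(self) -> float: ...


def averaged_reading(meter, frequency_hz, count):
    """Tune to frequency_hz and return the mean of `count` dBm readings."""
    meter.tune(frequency_hz)
    return statistics.fmean(meter.read_dbm() for _ in range(count))


def measurement_cycle(meter):
    """One pass: calibrate, 24 signal readings, then 12 readings at each offset."""
    meter.calibrate()
    signal = averaged_reading(meter, 60_000.0, 24)
    below = averaged_reading(meter, 59_900.0, 12)
    above = averaged_reading(meter, 60_100.0, 12)
    noise = (below + above) / 2
    return datetime.now(timezone.utc), signal, noise


def run(meter, log):
    """Repeat measurement cycles forever, logging time, signal and noise."""
    while True:
        stamp, signal, noise = measurement_cycle(meter)
        log.write(f"{stamp.isoformat()} {signal:.2f} {noise:.2f}\n")
        log.flush()
```

Keeping the instrument behind a small interface means the cycle logic can be exercised with a fake meter that returns canned readings, without a GPIB bus attached.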

The 3586C takes about one measurement per second, and calibration takes about three seconds. The sequence described above takes just about one minute, though it sometimes varies by a second or two. I plan to rewrite the program logic to use an alarm to trigger a reading cycle precisely every 60 seconds, but that wasn't done for this experiment.
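A fixed 60-second cadence doesn't strictly require an alarm signal. The sketch below uses a different technique from the alarm mentioned above: it sleeps until absolute deadlines computed from a fixed start time. A cycle that runs a second or two long therefore does not delay every later cycle. If a cycle overruns a whole period, it skips to the next boundary. The function names are illustrative.

```python
import math
import time


def next_deadline(start, now, period=60.0):
    """Return the first start + k*period (k >= 1) strictly after `now`.

    Deadlines are computed from the original start time rather than from the
    end of the previous cycle, so timing errors do not accumulate.
    """
    k = max(1, math.floor((now - start) / period) + 1)
    return start + k * period


def run_every(period, task, cycles):
    """Call task() `cycles` times, each call starting on a period boundary."""
    start = time.monotonic()
    for _ in range(cycles):
        task()
        deadline = next_deadline(start, time.monotonic(), period)
        time.sleep(max(0.0, deadline - time.monotonic()))
```

`time.monotonic()` is used instead of wall-clock time so that clock adjustments (for example, from NTP) cannot shorten or stretch a cycle.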

Finally, the 3586C derived its frequency reference from an HP 5065A rubidium frequency standard with a measured frequency offset of less than 1×10⁻¹¹. At 60kHz that is an error of less than 0.6µHz, so we can be quite sure that WWVB was centered in the 20Hz passband.